Time in Your City Now:

Beijing

Chicago

Abu Dhabi

Addis Ababa

Amman

Amsterdam

Antananarivo

Athens

Auckland

Baghdad

Bangkok

Barcelona

Beirut

Berlin

Bogotá

Boston

Brussels

Buenos Aires

Cairo

Cape Town

Caracas

Damascus

Delhi

Istanbul

London

Dhaka

Dubai

Dublin

Frankfurt

Guangzhou

Hanoi

Havana

Helsinki

Hong Kong

Lagos

Honolulu

Jakarta

Karachi

Kathmandu

Kinshasa

Kuala Lumpur

Kyiv

Las Vegas

Lima

Los Angeles

Moscow

Luanda

Madrid

Manila

Mecca

Mexico City

Mumbai

New York

Paris

Miami

Milan

New Delhi

Nuuk

What's Time Now?

When you encounter something you can't decide on, the Answer Book Online can provide you with a solution. Simply click on the Answer Book to quickly get an answer.

Each time you click on the Answer Book, it will give you an answer. Although the answer may not fully match your question or expectations, it can serve as a way of self-reflection, helping you think and solve problems from different perspectives.

We hope that the Answer Book can bring you inspiration and enjoyment in life, whether you are contemplating a question, making a decision, or simply using it for entertainment.

How to use Time Now?

When you encounter something you can't decide on, the Answer Book Online can provide you with a solution. Simply click on the Answer Book to quickly get an answer.

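Each time you click on the Answer Book, it will give you an answer. Although the answer may not fully match your question or expectations, it can serve as a way of self-reflection, helping you think and solve problems from different perspectives.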

Something That May Help

9 Time Management Tips to Benefit Your Life

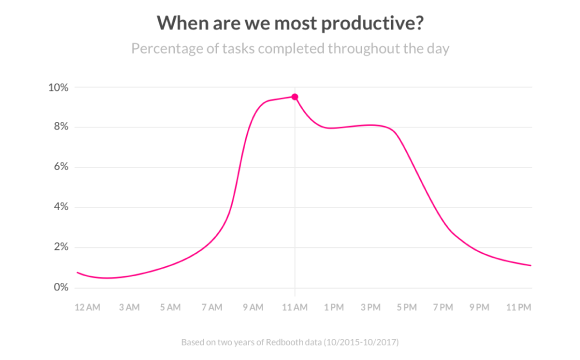

There is a term called the "busyness trap." It means that in modern society, everyone is constantly on the go, busy but unsure how to break free.

Read more

The Ultimate Guide to Overcoming Jet Lag

The pain of overcoming jet lag is something you, who are looking at your phone right now, have surely experienced. Today, Alice wants to chat with everyone...

Read more

How to Properly Plan Your Day?

In our fast-paced lives, time is like gold, with every second being incredibly precious. We all have 24 hours, but why do some people manage to accomplish...

Read more

How to Create a Daily Self-Discipline Plan on a Single Sheet of Paper: 7 Dai...

The 24 hours in a day will pass regardless, but the difference between people lies in their attitude towards time...

Read more